UX and UI Trends to Watch for in 2021

Users are swamped with information, both textual and visual. It takes only milliseconds for a customer to decide whether your website or mobile app is worth their time. Here, we look at the UX and UI design trends that are crucial to gaining and keeping their attention.

As technological innovations continue to surface, evolve, and gain traction (e.g., AI, biometrics, IoT, 5G, Virtual Reality, Augmented Reality, and serverless computing), UX and UI design will need to shift as well. Below, you’ll find our assessment of where the UX and UI industry is headed in 2021.

Accessibility Will Always Be #1

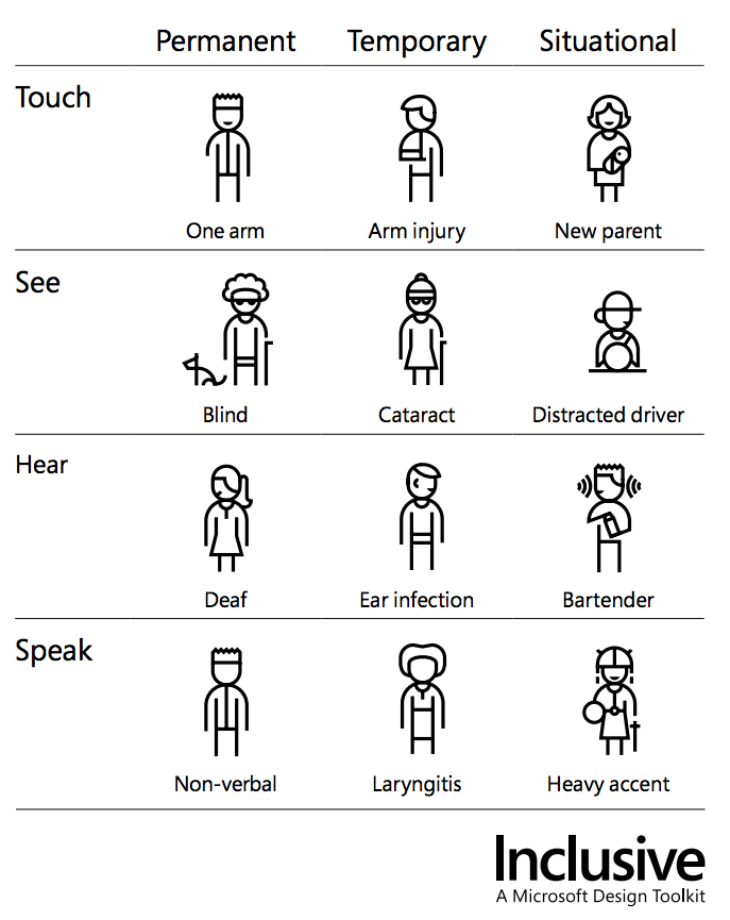

Regardless of the new gadgets, software, algorithms, or hardware that the tech world introduces, accessibility will continue to reign supreme. So, all UX and UI design must account for edge cases involving sight, touch, and sound.

For example, despite marketers’ focus on younger generations, the digital age increasingly requires older generations to carry out daily interactions through digital means.

As we age, our sensory faculties tend to decline in one way or another. Thus, it would be unwise to ignore the needs of this population (collectively, still one of the largest) when designing accessible UX/UI schemas.

With this in mind, we’ve categorized the UX and UI trends for 2021 by their sensory touchpoints.

Visual Accessibility: Animation, Color Schemes, and Augmented Reality

Up to 10% of the world’s male population has red-green color blindness. Furthermore, according to the Community Eye Health Journal, the number of people with cataracts increases by one million each year worldwide.

On the surface, these statistics may seem to cover only a small portion of the global population. In absolute terms, however, they represent hundreds of millions of people who may not be able to see your website or mobile app clearly. The ever-increasing use of smartphones worldwide raises the stakes further: smartphone users are expected to make up over half the world’s population by 2021.

Several of this year’s UI/UX trends center on animation, bold color schemes, 3-D graphics, Augmented Reality, and minimalist design. Before adopting any of these visual elements, make sure they remain accessible to the sub-populations within your target audience.

Examples include using text rather than color alone to convey essential information, testing the contrast ratio between text and background colors, and crafting readily visible focus states.
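To make the contrast-ratio check concrete, here is a minimal Python sketch. It assumes the relative-luminance and contrast-ratio definitions from WCAG 2.x and the level AA thresholds (4.5:1 for normal text, 3:1 for large text); the function names are our own, not part of any particular tool.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel (0-255) to linear light, per WCAG 2.x."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color written as '#rrggbb'."""
    hex_color = hex_color.lstrip("#")
    r, g, b = (int(hex_color[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(fg: str, bg: str) -> float:
    """WCAG contrast ratio (L1 + 0.05) / (L2 + 0.05), where L1 is the lighter color."""
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(fg: str, bg: str, large_text: bool = False) -> bool:
    """Level AA: at least 4.5:1 for normal text, 3:1 for large text."""
    return contrast_ratio(fg, bg) >= (3.0 if large_text else 4.5)
```

For example, mid-gray #767676 on white comes out at roughly 4.5:1 and just clears the AA bar for body text, while the slightly lighter #777777 falls just short (though it still passes for large text).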

As Augmented Reality and other advanced functions become more prevalent, accessibility considerations are likely to grow more complex. Consequently, demand for UX and UI designers is likely to increase as design requirements become more sophisticated.

Tactile Touchstones: Gesture Control

Although we rely first on what we can see to gather information, we’re also highly tactile, a trait reinforced by the handheld devices we carry everywhere, such as mobile phones and tablets.

We also tend to stay busy and try to do more than one thing at a time. Although substantial research shows that we can’t truly multitask (we’re really switching our attention rather than “multitasking”), we’re still prone to holding a mobile phone in one hand while trying to do other things (hopefully, not while driving).

New parents perfectly illustrate the “one-handed need to find something quickly on my phone” scenario. Also relevant are people whose injuries limit, or completely prevent, tactile navigation.

The tech industry has recognized these and other populations, such as service personnel who need both hands free to perform a task. As a result, gesture control (also known as a “natural user interface”) is increasingly being built into the digital systems we interact with every day.

For example, Google’s Pixel 4 phone introduced Motion Sense, a radar-based sensor that recognizes hand gestures made near the device. While you still need one hand free to make gestures the phone can recognize, it’s a step toward fully touchless interaction with the digital world.

In terms of UX/UI design and implementation, gesture control pushes the industry toward further individualizing the two-way interaction between the user and the interface. Too many gesture controls will overwhelm users; too few will frustrate them in equal measure. For this reason, incremental design and usability testing are particularly crucial for gesture control mechanisms, and, ideally, accessibility experts should be consulted.

The Sonics of Siri and Other Voice Controls: Can You Hear Me Now?

Natural language processing (NLP) is the AI sub-field behind Siri and other virtual assistants. Since we’re saturated with words in both written and spoken form, it’s easy to lose sight of (or completely ignore) the trickiness of language: nuance, tone, connotation vs. denotation, local vs. national dialects, formal vs. informal language, and so on.

This is one reason AI and machine learning algorithms have been slower to reach fluency in language recognition than in image recognition and classification (where there’s still much work to do as well). Historically, speech-to-text on our phones has been notoriously unreliable, sometimes with unfortunate results (in many languages, a single wrong letter can turn a friendly message into an insulting one).

There are other accessibility issues to consider as well: heavy accents, illnesses such as laryngitis, and background noise (heavy machinery or a crowded restaurant). As indicated above, we’re quickly moving toward an environment where we communicate with digital systems “organically.”

For voice interfaces, UX/UI design is currently focused on capturing the many possible meanings of what the speaker said and then deciding how best to offer corrections based on what they most likely meant.
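As a rough illustration of the “did you mean…?” step, the sketch below matches a transcribed utterance against a small, hypothetical list of supported commands using string similarity from Python’s standard difflib module. This is a deliberate simplification that assumes the speech has already been converted to text: production voice assistants rely on statistical language models rather than character matching, and the command list and 0.6 cutoff are illustrative assumptions.

```python
import difflib

# Hypothetical commands a voice-enabled ordering app might support.
KNOWN_COMMANDS = ["show my orders", "track my order", "cancel my order", "call support"]


def interpret(transcript: str, cutoff: float = 0.6) -> str:
    """Map a possibly mis-transcribed utterance to a reply.

    Exact matches are confirmed directly; near matches trigger a
    "Did you mean ...?" prompt instead of a silent guess; anything
    else asks the user to rephrase.
    """
    utterance = transcript.strip().lower()
    if utterance in KNOWN_COMMANDS:
        return f"OK: {utterance}"
    # get_close_matches returns up to n candidates scoring >= cutoff, best first.
    candidates = difflib.get_close_matches(utterance, KNOWN_COMMANDS, n=2, cutoff=cutoff)
    if candidates:
        options = " or ".join(f'"{c}"' for c in candidates)
        return f"Did you mean {options}?"
    return "Sorry, I didn't catch that. Could you rephrase?"
```

With this setup, a mis-transcribed “truck my order” leads the prompt with “track my order” as the top candidate instead of failing outright or acting on a guess the user never confirmed.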

For now, much of the refinement of voice recognition and speech-to-text rests in the hands of machine learning and AI experts. Eventually, though, we’ll be able to control by voice which visual elements we see (another form of individualization), and UX/UI designers will be the go-to experts for melding accessible design with voice recognition and accurate system responses.

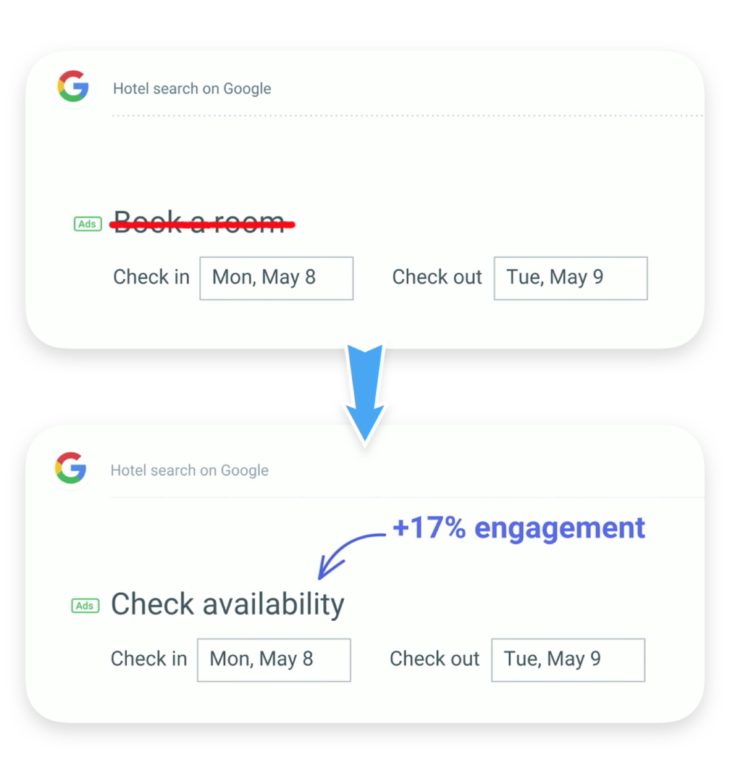

The Rise of the UX Writer: Clear Microcopy for the Win

While visual design is important, you’ll still need clear written communication that matches the tone, style, and objective of your UX/UI design. When swapping out a single word can either boost conversions or raise bounce rates, the importance of expert writing specific to UX should not be underestimated.

Enter the UX writer. According to Google Trends, searches for UX writer jobs first appeared in April 2012. Since then, as the digital age has rapidly expanded through social media and mobile apps, the need for concise, useful, and coherent copy has increased dramatically.

Constructing UX content for digital readers is not an innate skill. Microcopy is almost like poetry, except far less ambiguous. Then there’s typography, tone, and finding the right “meta tags”. There’s a science to it (with doses of creativity). Indeed, it’s a skill that is separate from (though related to) UX/UI design.

Thus, it’s not surprising that Google, Amazon, Dropbox, and numerous other major corporations have embraced UX writing as a distinct job function (and UX writers can earn over $130,000 per year).

For most enterprises, this is far too much work for a UX/UI designer to handle on top of their other responsibilities. So, to ensure that both the text and the design are compelling and clear, UX writing and UX/UI design should be distinct workloads, brought together through collaboration between the design and writing experts.

Chatbots: Friend or Foe?

To save on labor costs while streamlining and personalizing the customer experience, companies across industries are quickly adding chatbots to their mobile apps and websites: Pizza Hut, Lyft, Whole Foods, and Spotify are just a few of the brands that have implemented B2C chatbots. This is the realm of both the UX/UI designer and the UX writer.

UX/UI design for chatbots involves the same user design and accessibility factors already discussed. The troublesome component for the UX/UI designer and UX writer stems from the NLP issue of “misunderstanding nuances of the human language,” which leads to “too many unhelpful responses.” The UX writer, in particular, is tasked with determining what constitutes a “helpful response” in the context of what the user wants to achieve. Yet that can mean many things, even for a task as constrained as ordering a pizza.

However, the UX/UI designer can assist with the design and deployment of visual cues (e.g., pictures, video, etc.) that can pop up in the chat box. Another UX/UI design-focused feature would be to create chatbot personalities while also ensuring that there is a quick “escape route” for the user (before they become utterly disgruntled with the feature).
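One way to build that quick escape route is to count consecutive unhelpful replies and hand the conversation to a person once a threshold is reached, while always honoring an explicit request for a human. The Python sketch below assumes a hypothetical upstream NLP step that returns either an answer or None when it can’t help; the threshold and escape words are placeholders to tune through usability testing.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical words that let the user leave the bot at any time.
ESCAPE_WORDS = {"agent", "human", "representative"}


@dataclass
class ChatSession:
    """Tracks consecutive unhelpful replies so the bot can offer an exit early."""
    max_misses: int = 2  # hypothetical threshold; tune through usability testing
    consecutive_misses: int = 0

    def respond(self, user_message: str, bot_answer: Optional[str]) -> str:
        # An explicit request for a person always wins.
        if user_message.strip().lower() in ESCAPE_WORDS:
            return self._hand_off()
        if bot_answer is None:  # the (hypothetical) NLP step could not help
            self.consecutive_misses += 1
            if self.consecutive_misses >= self.max_misses:
                return self._hand_off()
            return "Sorry, I didn't get that. Rephrase, or type 'agent' to reach a person."
        self.consecutive_misses = 0  # a helpful answer resets the counter
        return bot_answer

    def _hand_off(self) -> str:
        self.consecutive_misses = 0
        return "Connecting you with a member of our team."
```

Resetting the counter after every helpful answer ties the handoff to consecutive failures, so a long but productive conversation isn’t cut short, and the very first miss already tells the user how to leave.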

For now, chatbots are still an emerging technology. But as AI’s NLP fluency improves and chatbots’ “helpfulness” accuracy increases, we’re likely to see them widely used for numerous low-level tasks (and, eventually, for more complex ones).

UX and UI Trends Beyond 2021

The tech industry will continue to do what it does best: innovate. While the mild panic about AI taking over nearly everyone’s job has a nugget of truth beneath the frenzy, we’re still a long way from an AI UX/UI designer that understands humans the way only a human can. So, as we strive to get ahead of the trends (or to create the next remarkably intuitive UX/UI environment), human accessibility, in all its diversity, should always take center stage, no matter what we are building.